Three years ago, the world was emerging from a bruising period. The S&P 500 was down 15%. The spectre of Covid still hung over everything. There was a tentative sense that we might be turning a corner, but optimism was in short supply.

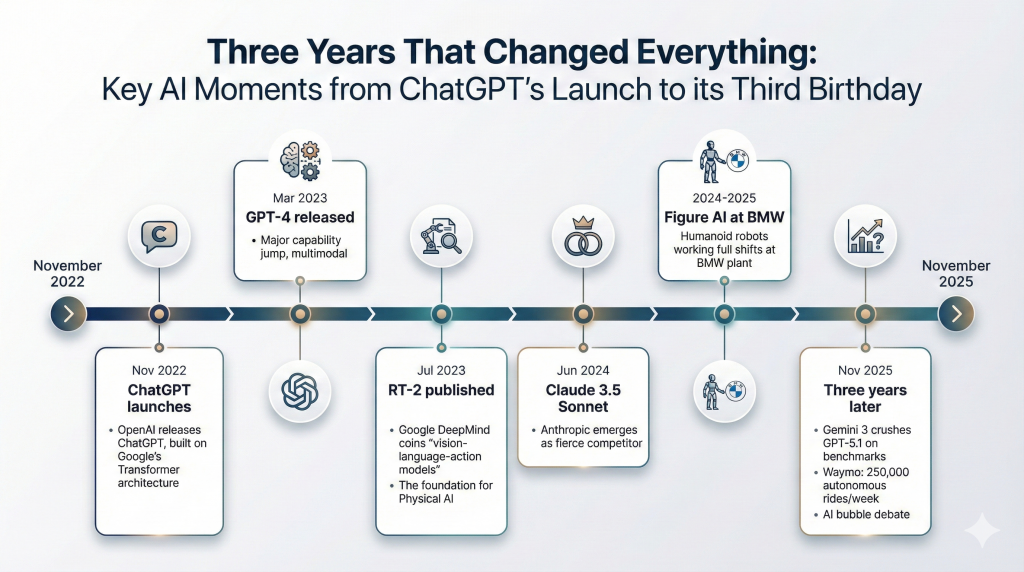

Then, on 30 November 2022, OpenAI released ChatGPT, built on the Transformer architecture that Google had invented and published in 2017, but not yet turned into a product.

Today, three years later, markets are up 16% year-to-date. There is a sense of exuberance. We’re debating whether we’re in an AI bubble. That optimism traces back to a single moment, precisely three years ago, which is why marking ChatGPT’s third birthday feels significant.

A few days after ChatGPT’s release, I wrote on LinkedIn that “the world changed forever”. The comments were instructive. “Do I understand correctly that ChatGPT only understands English?” asked one sceptic. “Then I don’t see it as a worldwide game changer.” Another dismissed it outright: “It can’t reason, ‘only’ parrot what is already known information.” A third found it couldn’t maintain a conversation: “It still couldn’t elaborate when I asked a follow-up question.”

These weren’t unreasonable concerns. ChatGPT was text-only, English-focused, couldn’t browse the internet, and made up plausible-sounding nonsense. The sceptics were asking whether a system limited to language – and struggling even at that – could really matter.

Three years on, that question has been answered decisively.

Mastering Language

Models still hallucinate, but far less often, and with better self-correction. The rest has transformed beyond recognition. ChatGPT now works fluently in over 100 languages. Reasoning models think step by step through PhD-level problems. Follow-up questions? Table stakes.

The benchmarks tell one story: real-world coding tasks up from 48% to 81%, novel reasoning tests from 5% to 88%. But the lived experience tells another. In November 2022, I asked ChatGPT to help analyse company financials. It provided confident nonsense with made-up numbers. Today, Claude extracts the metrics, compares them to benchmarks, identifies gaps and drafts follow-up emails. That’s not an incremental improvement. That’s a different category of tool.

The impact on how we work has been immediate. Three years ago, no developer used AI coding assistants. Today, the vast majority do. Google reports that more than a quarter of its new code is AI-generated. For me, the shift was personal: I used ChatGPT frequently, but it was Claude – and then Claude Code – that made AI indispensable. The difference between a capable tool and an essential collaborator.

OpenAI’s launch caught Google flat-footed. All eight authors of Google’s original Transformer paper eventually left the company. But OpenAI soon got fierce competition from Anthropic, founded in 2021 by former OpenAI researchers. When ChatGPT launched, Anthropic was barely a year old. Today, Anthropic holds 32% of the enterprise market. Google’s newly released Gemini 3 is formidable, crushing OpenAI’s GPT-5.1 on most benchmarks. And given the gap between revenue growth and planned investments, HSBC estimates OpenAI needs a further $207 billion of investment before the company breaks even. OpenAI had a head start, and they are still the “one to beat”. But the revolution OpenAI started is far from won.

And as for the sceptics who questioned whether ChatGPT was a “worldwide game changer”? They might have been asking the right question. But they got the wrong timescale.

Beyond Language

Here’s what three years of progress revealed: linguistic intelligence has limits.

Think about how human intelligence actually develops. A child spends years grasping objects, navigating rooms, learning that dropped things fall and hot things burn, all before speaking a single word. Our minds are built on that foundation of sensing and acting. Language comes later, layered on top.

LLMs skipped that foundation entirely. They learned everything from text, never touching anything. As Stanford’s Fei-Fei Li puts it, they remain “wordsmiths in the dark; eloquent but inexperienced, knowledgeable but ungrounded.” They can discuss physics brilliantly. They cannot catch a ball.

This is the next frontier: AI that understands reality the way we do. Not by reading about the world, but by sensing it, moving through it, acting within it.

The Convergence

Physical AI is unlocking now because three strands of technology are finally converging.

We’ve had physics simulation for decades: game engines, industrial simulators, digital twins. We’ve had real-world robotics, collecting data one painstaking interaction at a time. And we’ve had machine learning, pattern-matching on whatever data was available. Each existed in isolation. None was sufficient alone.

The breakthrough is their synthesis. GPU-accelerated physics engines now run 100 times faster than before, generating vast amounts of training data in simulation. Foundation models pre-trained on internet-scale data provide the generalisation that narrow robotics models lacked. And techniques like automatic domain randomisation – varying friction, lighting, object properties randomly in simulation – create policies robust enough to transfer to the real world.
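To make the randomisation idea concrete, here is a minimal sketch of drawing a fresh set of physical parameters for each simulated training episode. It is an illustration under stated assumptions, not any lab's actual pipeline: the names `SimParams`, `RANGES`, `sample_params` and `randomised_episodes` are hypothetical, the ranges are invented, and it shows plain uniform randomisation rather than the automatic variant, which also adjusts the ranges as training progresses.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SimParams:
    friction: float         # surface friction coefficient
    light_intensity: float  # relative scene brightness
    object_mass_kg: float   # mass of the object being manipulated


# Hypothetical ranges, chosen only for illustration.
RANGES = {
    "friction": (0.2, 1.2),
    "light_intensity": (0.3, 1.5),
    "object_mass_kg": (0.05, 2.0),
}


def sample_params(rng: random.Random) -> SimParams:
    """Draw one randomised set of simulation parameters."""
    return SimParams(
        **{name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()}
    )


def randomised_episodes(n: int, seed: int = 0) -> list[SimParams]:
    """Return n parameter sets, one per simulated training episode."""
    rng = random.Random(seed)
    return [sample_params(rng) for _ in range(n)]
```

A policy trained across many such episodes never sees the same world twice, which is the intuition behind why it copes better when the real world differs from any single simulated one.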

In July 2023, Google DeepMind published RT-2 and gave the approach a name: vision-language-action models. Systems that see, understand instructions, and act. All integrated. Tell a robot “fold the towel” versus “stack the dishes”, and it figures out the difference. No explicit programming. No predefined steps. It learns patterns and handles situations it has never encountered.

Jensen Huang frames it simply: “Just as large language models revolutionised generative AI, world foundation models are the breakthrough for physical AI.”

This is why we’re at an inflexion point. Not a single invention, but a convergence: simulation, foundation models, and real-world deployment finally working together.

We are still in the foothills. Current models succeed on basic manipulation (for example, picking up an object) only some of the time. And the margin for error in the physical world is unforgiving: a dropped component breaks, a wrong movement damages equipment. But the trajectory is unmistakable. Figure AI’s humanoid robots now work full shifts at BMW’s Spartanburg plant, trained largely in simulation and deployed in reality. As Figure’s Brett Adcock puts it: “Eventually, physical labour will be optional.”

Not Just Robots

This is not just about robots. Spatial intelligence means AI that can optimise a factory floor, manage energy flows across a grid, or coordinate construction equipment across a site. It means systems that understand physical constraints the way current models understand grammar.

Waymo is already there. 250,000 autonomous rides per week in the US, with London launching in 2026. No safety driver. No remote operator. Just AI that understands how to navigate the physical world.

Hive Autonomy enables single operators to command fleets of autonomous construction equipment: diggers and haulers responding to intent, not explicit instructions. That’s not a research demo. That’s operating now.

The Work Question

The work question looms. If AI can write, code, analyse, and now act in the physical world, what’s left for humans to do?

The surface concern is compelling. But there’s a deeper pattern worth understanding. The biologist Stuart Kauffman calls it the “adjacent possible”: each problem we solve expands the frontier of what becomes possible next. The web enabled social media. Social media enabled the creator economy. Each solved problem reveals problems that couldn’t even be formulated before.

Physicist David Deutsch makes the philosophical case: we are not approaching the end of useful work. We are at the beginning of infinity. The space of worthwhile problems expands faster than our capacity to solve them. Climate. Energy. Healthcare. Ageing. Space. Consciousness itself. The list doesn’t shrink as we progress. It grows.

The transition won’t be painless. Technology is advancing faster than institutions can adapt, and the short-term disruption will be real. But human ingenuity has never failed to find work worth doing. There’s no reason to believe this time is different, and a universe of reasons to believe the opportunities ahead are extraordinary.

Three Years Hence

Three years ago, the question was: “Is this actually useful?” The sceptics had reasonable concerns about a system that only spoke English and couldn’t hold a conversation.

Those concerns fell faster than anyone predicted. Roy Amara observed that we overestimate technology in the short run and underestimate it in the long run. ChatGPT inverted that law. The sceptics underestimated the short run. And the long run? We are only just getting started.

Happy birthday, ChatGPT. You proved machines could master language. The question now is whether they can master reality itself.

Give it three years.